The ELIZA Effect: Why Humans Form Emotional Attachments to Simple Code

Welcome to my blog, theaihistory.blogspot.com, a comprehensive journey through the evolution of Artificial Intelligence and the timeline of AI that has reshaped our technological landscape. History is not just about the distant past; it is the foundation of our future. Here, we will explore the fascinating milestones of machine intelligence, tracing its roots back to the theoretical brilliance of early algorithms and to Alan Turing's groundbreaking concepts, which first challenged humanity to ask whether machines could think. As we trace decades of historical breakthroughs, computing's dark ages, and its glorious renaissance, we will uncover how those early mathematical dreams paved the way for today's complex neural networks. Join us as we delve into this rich historical tapestry, culminating in the transformative modern era of Generative AI, to understand how this revolutionary technology evolved from mere ideas into systems that are redefining the world we live in. Happy reading.

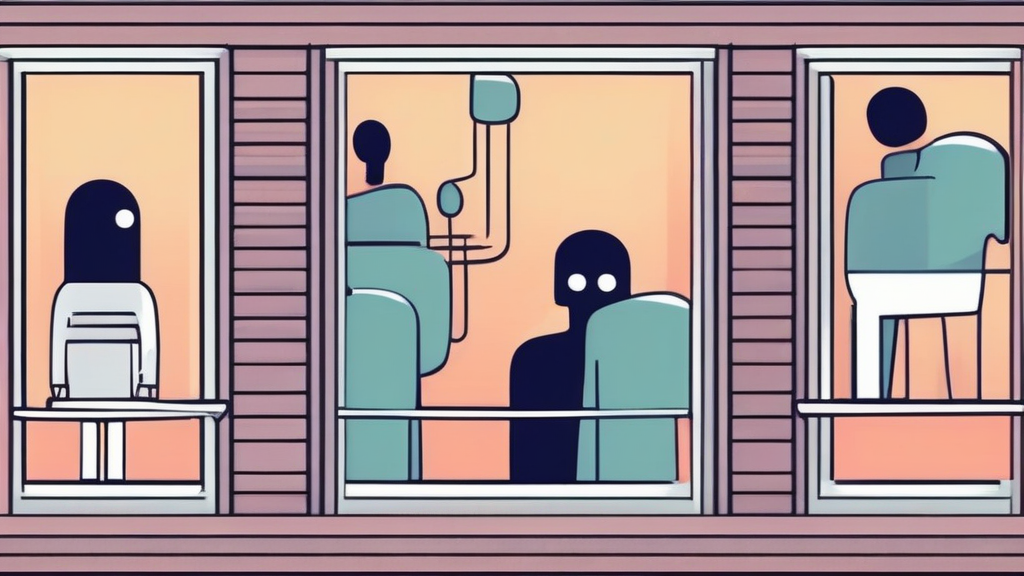

I remember the first time I felt a genuine sense of connection with a machine. It wasn't a sophisticated AI or a high-end virtual assistant; it was a clunky, text-based script that mirrored my own sentences back at me. It felt surprisingly human, even though I knew, logically, that I was talking to a box of logic gates and punch cards. This isn't just my own quirk; it is a well-documented phenomenon known as the ELIZA effect.

When we meet ELIZA, the 1960s computer program often called the world's first chatbot, we are confronted with a mirror of our own subconscious. Developed at MIT between 1964 and 1966, the program is best known for its DOCTOR script, which simulated a Rogerian psychotherapist. It didn't "think" in any modern sense. It simply scanned the user's input for keywords and rearranged it into questions.

Yet, people poured their hearts out to it. They confided in it. They started to believe that this simple script understood their pain. Why does our brain insist on anthropomorphizing a few lines of code? Let’s pull back the curtain on why we are so prone to projecting humanity onto cold, calculating machines.

The Psychology Behind the ELIZA Effect

We are social creatures by design. Our brains are hardwired to detect agency, intention, and emotion in the world around us. When a computer provides a response that seems to follow the rules of human conversation, our pattern-recognition engines kick into high gear.

The ELIZA effect occurs when we unconsciously assume that a computer system has human-level understanding simply because its output matches the syntax of human communication. It’s a trick of the mind. We project our own meaning onto the machine's hollow responses.

How the ELIZA Effect Shapes Our Reality

Think about your interactions with modern voice assistants or customer service bots. Even when you know you are talking to an algorithm, you might feel a flicker of annoyance when it doesn't "listen" or a sense of gratitude when it solves a problem. We have a tendency to treat these systems as if they have feelings, even though we know better.

This isn't just a nostalgic look at history. It is a fundamental aspect of human-computer interaction. Understanding why we fall for this allows us to be more critical of the tech we use every day. We aren't just users anymore; we are participants in a psychological dance with silicon.

Meet ELIZA: The 1960s Computer Program That Became the World's First Chatbot

Joseph Weizenbaum, the creator of ELIZA, was actually quite horrified by how quickly people became attached to his program. He intended it as a demonstration of how easily a superficial illusion of conversation could be created. He wanted to show that computers were not, and should not be, substitutes for human connection.

Instead, he watched as his secretary asked him to leave the room so she could have a private conversation with the machine. It was a massive wake-up call for the field of artificial intelligence. The program relied on two techniques, pattern matching and substitution, to make it appear to be listening:

- It identified keywords in a user's input.

- It applied a transformation rule tied to that keyword to build a response, often swapping pronouns (turning "my" into "your", for example).

- If no keyword was found, it provided a generic, non-committal prompt.
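The three steps above can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not Weizenbaum's code: the rule set, the `Eliza` class, and the `reflect` helper are invented for this example, and a fixed rule order stands in for the keyword ranking the original program used.

```python
import itertools
import re

# Hypothetical keyword rules in the spirit of ELIZA's DOCTOR script (not the
# original). Each pattern captures the fragment after the keyword; "{0}" in a
# template receives that fragment after pronoun reflection.
RULES = [
    (r"\bi need (.+)", ["Why do you need {0}?", "Would it really help you to get {0}?"]),
    (r"\bi am (.+)", ["How long have you been {0}?", "Why do you think you are {0}?"]),
    (r"\bmy (.+)", ["Why do you say your {0}?", "Does it bother you that your {0}?"]),
]

# Generic, non-committal prompts used when no keyword matches.
FALLBACKS = ["Please go on.", "I see.", "How does that make you feel?"]

# Pronoun swaps so an echoed fragment reads from the program's point of view.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are",
               "you": "I", "your": "my", "mine": "yours"}


def reflect(fragment: str) -> str:
    """Swap first- and second-person words, e.g. 'my job' -> 'your job'."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


class Eliza:
    def __init__(self) -> None:
        # Compile each pattern once and cycle through its templates so that
        # repeated matches on the same keyword do not produce identical replies.
        self._rules = [(re.compile(pattern, re.IGNORECASE), itertools.cycle(templates))
                       for pattern, templates in RULES]
        self._fallbacks = itertools.cycle(FALLBACKS)

    def respond(self, user_input: str) -> str:
        text = user_input.strip().rstrip(".!?")
        # Step 1: look for a keyword; earlier rules take priority.
        for pattern, templates in self._rules:
            match = pattern.search(text)
            if match:
                # Step 2: apply the keyword's transformation rule to build a reply.
                return next(templates).format(reflect(match.group(1)))
        # Step 3: no keyword found, so fall back to a generic prompt.
        return next(self._fallbacks)


if __name__ == "__main__":
    bot = Eliza()
    for line in ["I am worried about my mother.", "My job is stressful", "The weather is nice"]:
        print(">", line)
        print(bot.respond(line))
```

Given "I am worried about my mother.", the sketch replies "How long have you been worried about your mother?" Nothing in it models meaning: the words after "I am" are copied, their pronouns swapped, and dropped into a template. The sense that the program understood comes entirely from the person reading the reply.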

It was simple, elegant, and dangerously effective. The genius wasn't in the complexity of the code, but in the simplicity of the design. It proved that humans do most of the heavy lifting when it comes to "understanding." We provide the context, and the machine provides the blank space for us to fill in.

Why We Are Still Vulnerable Today

Modern Large Language Models (LLMs) are vastly more complex than ELIZA, but the underlying psychological mechanism remains the same. When a chatbot writes with perfect grammar and a polite tone, we feel an urge to treat it like a peer. This is one reason businesses increasingly use AI to handle customer interactions.

They know that a polite, "empathetic" sounding bot can reduce customer frustration, even if the bot isn't actually feeling anything. It’s a powerful tool for brand management, but it also raises ethical questions about transparency. Are we being manipulated by a machine that mimics the cadence of a friend?

The Role of Anthropomorphism in Technology

We see anthropomorphism everywhere now. Cars that "talk" to us, vacuum cleaners that have "personalities," and social media feeds that "know" what we want. We are surrounded by systems that are designed to trigger our social instincts.

It’s not necessarily a bad thing to have a helpful assistant. However, we have to keep our guard up. When we forget that we are dealing with a statistical model, we risk giving away more of our personal data than we should. We start treating the machine as a confidant, forgetting that it is, at its core, a data-processing engine.

Practical Tips for Maintaining Perspective

If you find yourself getting a little too close to your favorite AI tool, don't panic. It's a human response. But you can take a few steps to keep your relationship with technology healthy and grounded.

- Remind yourself that the AI has no feelings and no lived experience, even if it can recall earlier parts of your conversation.

- Review the terms of service to understand how your data is being used.

- Keep your sensitive, personal information for human friends and family members.

- Treat the AI as a tool for efficiency, not a companion for emotional support.

These simple steps can help you enjoy the convenience of modern technology without becoming overly dependent on it. The goal is to use the machine, not to be used by the psychological tricks it inadvertently plays on us.

The Future of Human-Computer Relationships

As we move toward more immersive digital environments, the lines will only continue to blur. We may be heading toward a world where AI can mimic the expression of human emotion with startling accuracy. That would make the ELIZA effect even more potent, and it would be harder to tell where the human ends and the code begins.

This is why we need to talk about digital literacy. We need to teach ourselves and the next generation that just because something sounds human, it doesn't mean it is. The value of human connection is in the mutual vulnerability, the shared experiences, and the genuine, messy reality of life—things that no amount of code can ever truly replicate.

The next time you find yourself chatting with an AI, take a second to pause. Look at the screen and remember the simple 1960s program that started it all. You are talking to a mirror. Enjoy the conversation, use the insights, but never lose sight of the fact that you are the one bringing the humanity to the table.

If you are an online business owner or a tech enthusiast, understanding this phenomenon is key to building better products and more honest relationships with your audience. Don't just build a bot—build a transparent, helpful tool that respects the intelligence of your users. Let's keep our digital interactions grounded in reality, one conversation at a time.

Thank you for reading my article carefully, thoroughly, and wisely. I hope you enjoyed it and that you are under the protection of Almighty God. Please leave a comment below.
